By Meredith Alexander Kunz, Adobe Research

New work by Adobe Research is a step forward in predicting the future.

Intelligent agents that use computer vision—such as self-driving cars or robots—could perform better if they knew what would happen next. Predicting movement or objects in the next frames of a video feed could potentially save lives or simply increase accuracy across many tasks.

Researchers have recently explored this topic on two levels—semantics (the objects in the video) and motion dynamics (how things move). A new investigation by Adobe Research scientists and university colleagues uses deep learning to unite these two kinds of predictions into a single whole—providing much more powerful results.

The work, presented in a paper at the Conference on Neural Information Processing Systems (NIPS), is the result of a collaboration between National University of Singapore scholars and Adobe Research’s Xiaohui Shen, senior research scientist, Jimei Yang, research scientist, and Zhe Lin, principal scientist.

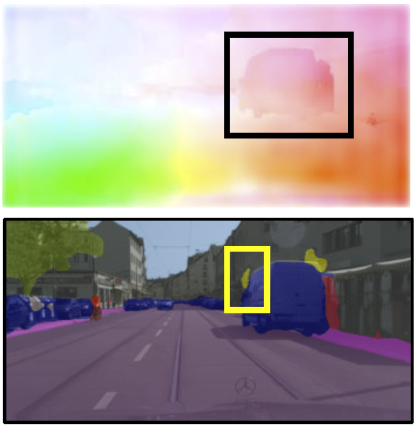

Shen and his collaborators created two systems tapping into convolutional neural networks and trained them on a public video dataset of city street scenes. One of the systems accepts an input of the images of the previous four frames of a video and creates an output of predicted motions in the fifth (future) frame. A second system accepts an input of semantics from four previous frames, including labeled objects such as busses, cars, people, roads, street lights, sky, etc. Its output is the next frame’s predicted objects.

Here’s the novel element: In-between the two systems is a bridge, or “transform layer,” where the two networks communicate and inform each other. This way, the system in charge of semantics can learn something about how items are moving through a scene, and the movement system can be informed by the semantic data.

The logic behind this is intuitive. “If you know the motion of a group of pixels, it can help tell you what the object is,” says Shen. “Likewise, if you know about a scene’s semantics—that a specific object is a car or person instead of a road or street sign—you know more about what those objects’ motion will be. Our contribution is to connect these two kinds of information to help the network learn to predict objects in the scene and motion of those objects together.”

Results were a significant improvement over current state-of-the-art predictions that rely on just one of these elements. “By fusing motion and semantics, you get a better outcome than you would if you looked at them separately,” says Shen.

Though this work is still early-stage, the approach could be a boon for those developing self-driving vehicles, and it has many other potential uses. Researchers imagine augmented or virtual reality applications, for instance, where future scene prediction could alleviate lags in real-time video displays.

These images show the prediction results (for motion and for objects) obtained from the team’s neural networks. These results are clearer and more accurate than the previous state-of-the-art.

Contributors:

Xiaohui Shen, Jimei Yang, and Zhe Lin, Adobe Research

Xiaojie Jin, Huaxin Xiao, Jiashi Feng, and Shuicheng Yan, National University of Singapore

Zequn Jie, Tencent AI Lab